Sen. Wyden: Days of Considering Edge Platforms Neutral Are Over

Trying to determine online friend from foe, and who makes that call, is an enormous, perhaps impossible task, and implicates both online and traditional news and information outlets. But the problem starts with non-neutral social media platforms.

That was a big takeaway from the Hill Wednesday (Aug. 1).

"I just want to be clear, as the author of Sec. 230 [of the Communications Decency Act], the days when these 'pipes' are considered neutral are over because the whole point of 230 was to have a shield and a sword, and the sword hasn't been used," Sen. Ron Wyden (D-Ore.) said at a Senate Intelligence Committee hearing Wednesday.

Section 230 provides liability carve-outs for the content posted on social media platforms like Twitter and Facebook (the "pipes" in this case, a designation more often reserved for ISPs, which are regulated), under the theory that the platforms were simply the online public square for those ideas and that making them liable would blow up their business model.

The witnesses for the Intelligence Committee hearing on "foreign influence operations and their use of social media platforms" did not include any of the major social platforms being discussed. They were instead academics and researchers: Dr. Todd Helmus, senior behavioral scientist, RAND Corp.; Renee DiResta, director of research, New Knowledge; John Kelly, CEO, Graphika; Laura Rosenberger, director, Alliance for Securing Democracy at The German Marshall Fund of the United States; and Dr. Philip Howard, director, Oxford Internet Institute.

Rosenberger echoed the idea of edge provider non-neutrality: "These platforms are not neutral pipes. Information is not being served up without some kind of algorithm deciding, for most of the platforms, what is served up at the top."

Sen. Richard Burr (R-N.C.), chair of the committee, said nothing less than the integrity of democratic institutions is at stake. Burr summed up the challenge, asking, "How do you keep the good while getting rid of the bad?"



Burr said that was the fundamental question before not just the committee but the American people. He called it a complex problem that "intertwines" First Amendment freedoms, corporate responsibility, regulations, and the right of innovators to profit from their innovation.

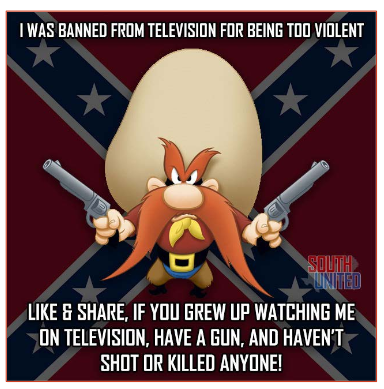

Sen. James Risch (R-Idaho) said he thought the takeaway from all the testimony was how difficult the problem is. "We know the problem," he said. "We have bad [foreign] actors putting out bad information. The difficulty is segregating those people from Americans who have the right to do this, whether or not it is disgusting or untrue or with a bad motive. It is protected by the First Amendment."

Who decides who are the bad actors, he asked, and how do you protect the anonymity of, say, activists in authoritarian regimes while trying to fight bad actors?

"How in the world do you do this?" Risch said. "The takeaway here has got to be that this is just an enormous, if not an impossible, thing."


Sen. Susan Collins (R-Maine) pointed out that the traditional media were also part of the problem, unwittingly amplifying fake news and social media posts.

Rosenberger agreed. She pointed out that a Twitter account that was created by Russia's Internet Research Agency (IRA) and focused on the NFL "take a knee" controversy had been cited by more than a dozen major news outlets, including the BBC, Huffington Post and Wired.

Collins said reading about such posts in credible sources makes people more likely to believe them.

Sen. Mark Warner (D-Va.), vice chair of the Intelligence Committee, took aim at the edge, as well, but took some of the edge off.


"All the evidence this Committee has seen to date suggests that the platform companies – namely, Facebook, Instagram, Twitter, Google and YouTube – still have a lot of work to do," Warner said, but then added, sounding like a parent disciplining an unruly child, "I’ve been hard on them – that’s true. But it’s because I know they can do better to protect our democracy. They have the creativity, expertise, resources and technological capability to get ahead of these malicious actors."

And while Wednesday's hearing focused on academics and researchers, Warner said executives from Facebook, Twitter and Google will be in attendance for a hearing Sept. 5 to provide "the plans they have in place, to press them to do more, and to work together to address this challenge."

Contributing editor John Eggerton has been an editor and/or writer on media regulation, legislation and policy for over four decades, including covering the FCC, FTC, Congress, the major media trade associations, and the federal courts. In addition to Multichannel News and Broadcasting + Cable, his work has appeared in Radio World, TV Technology, TV Fax, This Week in Consumer Electronics, Variety and the Encyclopedia Britannica.