State AGs Launch Instagram Investigation

Say they are looking for any unlawful practices by parent company Meta

A bipartisan group of state attorneys general has launched an investigation into Instagram, the social media platform owned by Meta (formerly Facebook), over what they say was the company's promotion of the platform to children and teens despite knowing that its use could lead to physical and mental health harms.

The investigation is being co-led by Massachusetts Attorney General Maura Healey and also includes AGs from California, Florida, Kentucky, Nebraska, New Jersey, Tennessee, and Vermont.

“Facebook, now Meta, has failed to protect young people on its platforms and instead chose to ignore or, in some cases, double down on known manipulations that pose a real threat to physical and mental health – exploiting children in the interest of profit,” said Healey. “As Attorney General, it is my job to protect young people from these online harms.”

She said the AGs would try to identify any unlawful practices and end the company's alleged abuses for good. "Meta can no longer ignore the threat that social media can pose to children for the benefit of their bottom line," she said.

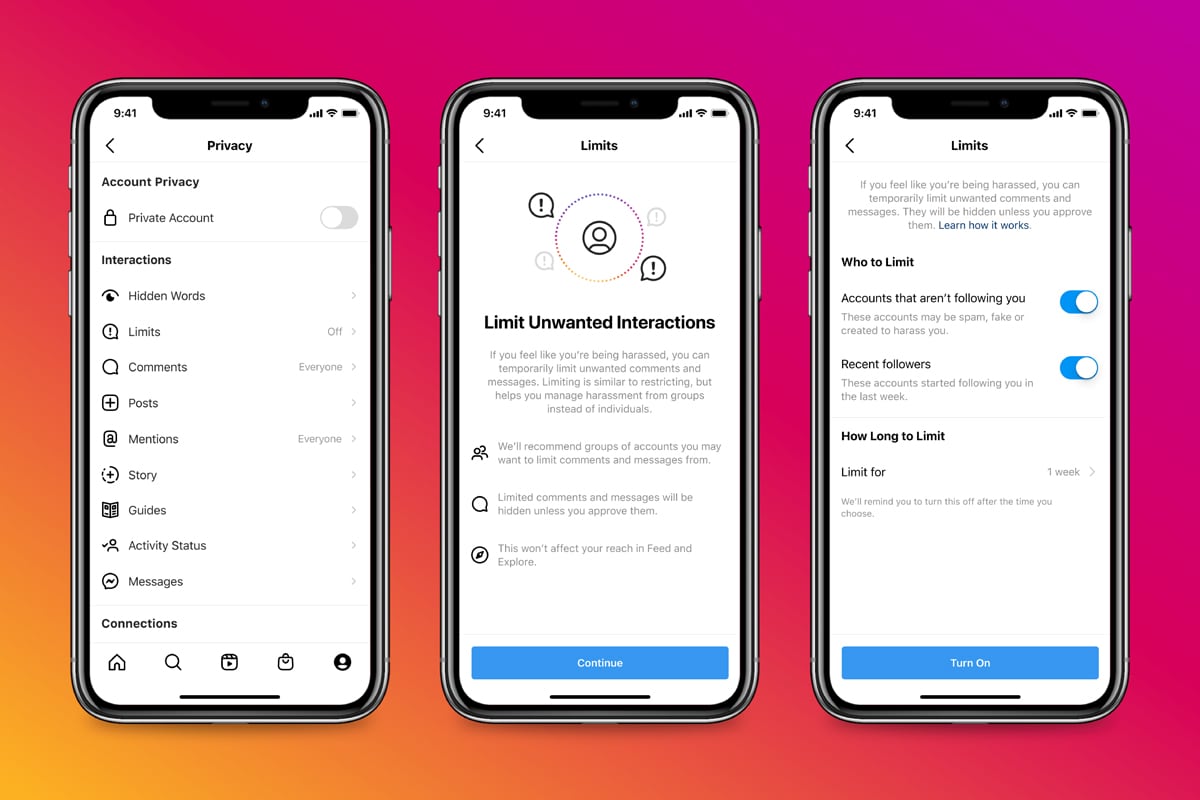

The investigation will examine what techniques, if any, Meta used to increase how often and how long younger users engage with the platform, and the harms that resulted.


Like the Capitol Hill hearings that preceded it, the investigation stems in part from internal Facebook research handed over to Congress by whistleblower Frances Haugen.

"Today’s announcement follows recent reports revealing that Meta’s own internal research shows that using Instagram is associated with increased risks of physical and mental health harms on young people, including depression, eating disorders, and even suicide," Healey's office said.

Last May, Healey also helped lead a coalition of 44 AGs that urged Facebook not to launch a kids' version of Instagram. The company ultimately agreed to pause the effort, though it did not abandon the project entirely.

"These accusations are false and demonstrate a deep misunderstanding of the facts," said Meta in a statement. "While challenges in protecting young people online impact the entire industry, we’ve led the industry in combating bullying and supporting people struggling with suicidal thoughts, self-injury, and eating disorders. We continue to build new features to help people who might be dealing with negative social comparisons or body image issues, including our new 'Take a Break' feature and ways to nudge them towards other types of content if they're stuck on one topic. We continue to develop parental supervision controls and are exploring ways to provide even more age-appropriate experiences for teens by default."

“Combined with our Congressional scrutiny, this investigation will shine a bright light on Instagram’s profiting from harm to kids," said Sen. Richard Blumenthal (D-Conn.), chair of the Senate Consumer Protection Subcommittee, who led a hearing into Haugen's allegations and documents. "Facebook can no longer hide or conceal facts that parents need and deserve to know. Mark Zuckerberg must make a choice: either Facebook comes clean on its own, or this bipartisan group of state attorneys general will show the world even more ugly truths.”

He added: “Facebook must release its full research, give access to independent researchers, and support meaningful legislation. I look forward to seeing the results of this important investigation and continuing to work with colleagues on both sides of the aisle on legislation to protect kids online.” ■


Contributing editor John Eggerton has been an editor and/or writer on media regulation, legislation and policy for over four decades, including covering the FCC, FTC, Congress, the major media trade associations, and the federal courts. In addition to Multichannel News and Broadcasting + Cable, his work has appeared in Radio World, TV Technology, TV Fax, This Week in Consumer Electronics, Variety and the Encyclopedia Britannica.