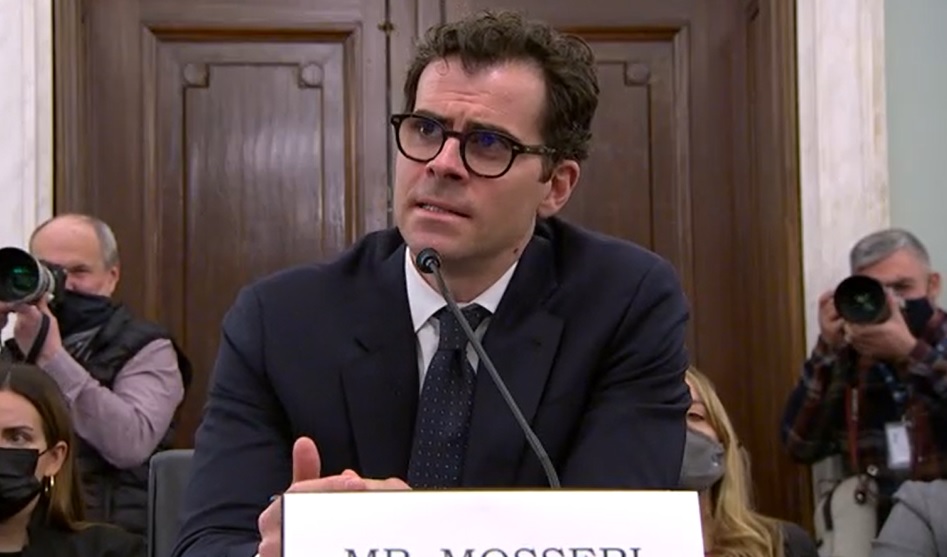

Instagram's Adam Mosseri Faces Barrage of Hill Critics

Sen. Blumenthal says company has squandered any trust needed for self-reg

Congress continued its bipartisan beatdown of Big Tech Wednesday in a Senate Consumer Protection Subcommittee hearing featuring Instagram CEO Adam Mosseri, with more hammering of the industry expected the following day in a House Consumer Protection Subcommittee hearing titled "Holding Big Tech Accountable: Legislation to Build a Safer Internet" and a Senate Communications Subcommittee hearing the same day, “Disrupting Dangerous Algorithms: Addressing the Harms of Persuasive Technology.”

At Wednesday's hearing, co-chaired by Committee Chairman Richard Blumenthal (D-Conn.) and Marsha Blackburn (R-Tenn.), Mosseri repeatedly took flak from both sides of the aisle. The hearing was prompted by Facebook whistleblower revelations about internal research showing some negative impact of Instagram on young people, though the company has countered that that research showed more people said it was a positive influence.

For his part, Mosseri said: "I’m proud of our work to help keep young people safe, to support young people who are struggling, and to empower parents with tools to help their teenagers develop healthy and safe online habits." Mosseri, on the eve of the hearing, announced several changes he said would make the platform even safer.

But he also suggested that the issue was bigger than his company, arguing that "more U.S. teens are using TikTok and YouTube than Instagram."

That has hardly assuaged those legislators, who argue Instagram parent Meta (formerly Facebook) is emblematic of the dominant Big Tech players that have grown without sufficient oversight of how they got that way and what they can do, and have done, with their massive power. In response, Facebook has taken out an ad campaign essentially calling for tailored regulation of itself and others, though not the elimination of their Sec. 230 immunity from civil liability over third-party content, something legislators have kept on the table.

Blumenthal raked Instagram over the coals in his opening, saying social media fanned the flames of a mental health crisis. He said proposals of self-regulation depended on trust and Instagram and other social media giants had squandered that trust. He said he was stunned by the lack of action by Meta after he told the company of the subcommittee's test using a made-up teen account that found it flooded with dangerous pro-anorexia and eating disorder info. He said a similar test this week yielded the same results.

Blumenthal said the time for self-regulatory bodies, as Mosseri and Instagram proposed, was over and that legislation and regulation were coming. Sen. Ed Markey (D-Mass.) agreed that a self-regulatory body would not fill the bill given revelations about the company.

Sen. Blackburn echoed Blumenthal, saying that she was frustrated that Meta had not taken action, but simply continued to say that its platform needed improvement, or more tools in the toolkit, or that data would be secure, then offered half-measures that didn't cut it. She said those measures were too little too late, and put some flesh on the bones of coming regulation, saying legislators were working on children's privacy, online privacy, data security, and Sec. 230 reforms.

“We should no longer permit Instagram to manipulate and harm the nation’s youth,” said Jeff Chester, executive director of the Center for Digital Democracy in Washington, D.C., of Mosseri's testimony. “This company has demonstrated time and again it cannot be trusted to place the interests of children ahead of its quest to reap tremendous revenues,” he said. “Only Congress can protect children and teens online by passing a comprehensive law which ensures platforms such as Instagram operate responsibly.”

Other takeaways from the hearing:

Mosseri bristled at the suggestion by Blumenthal that Instagram's content was addictive, saying that was not the case.

Blumenthal asked if Mosseri would commit to stop developing Instagram Kids for children under 13, a project the company paused after Hill pushback. Mosseri would not, saying the idea for an Instagram for 10- to 12-year-olds was meant to solve a problem, which is kids wanting to access the older version.

Blumenthal cited a just-released Surgeon General's report that he said provided evidence of the negative impact of social media (and video games) on mental health. The report concluded that Big Tech "must step up and take responsibility for creating a safe digital environment for children and youth."

An emotional Sen. Amy Klobuchar (D-Minn.) said Instagram was using kids as cash cows and that its interests and those of parents and children were diametrically opposed.

In response to probing from Klobuchar, Mosseri conceded that he assumed it was accurate that a company executive said losing teen users would be an existential threat.

Blumenthal said Mosseri's suggestion that companies should have to earn Sec. 230 protection has some appeal to him, but it was not government regulation. He asked if Mosseri would support applying the UK's Children's Code to the U.S. Mosseri said he would and that enforcement should be at the federal level. Mosseri did not say he would explicitly support giving users a private right of action.

Mosseri denied that the company's marketing budget went from $67 million to $390 million with the majority going to teens. Klobuchar pointed out that the New York Times story that reported that budget increase said "much" not "majority" went to woo teens and asked if he denied that. He said he did not know how much of the budget it was but would get back to her.

Mosseri would not agree to support legislation by Sen. Ed Markey (D-Mass.) banning ads targeted to kids, saying that some such ads can be helpful and that its approach is to prevent some types of targeted ads to teens, weight-loss ads for example.

Asked if he would hire more human reviewers, a suggestion from whistleblower Frances Haugen, Mosseri hedged his answer, saying people are better at nuance, while technology is better at scale.

"Today's hearing with Adam Mosseri was just more of the same from Meta: evasions, empty promises, and half-baked safety measures on Instagram that should've been in place all along," said Josh Golin. executive director of Fairplay. "The bottom line is this: Instagram's advertising business is harming children, and nothing meaningful has been done to change that. It is shocking that even disturbing practices exposed by the Senators at the Antigone Davis hearing—that new accounts were fed content promoting eating disorders—still exist two months later. It's clear that self-regulation will not work. Congress must act now and regulate Big Tech to protect children." ■

Contributing editor John Eggerton has been an editor and/or writer on media regulation, legislation and policy for over four decades, including covering the FCC, FTC, Congress, the major media trade associations, and the federal courts. In addition to Multichannel News and Broadcasting + Cable, his work has appeared in Radio World, TV Technology, TV Fax, This Week in Consumer Electronics, Variety and the Encyclopedia Britannica.